Feb 2021 - Aug 2021

:: Software Contractor Part-time

#fpga

#verilog

#systemverilog

#kernel-development

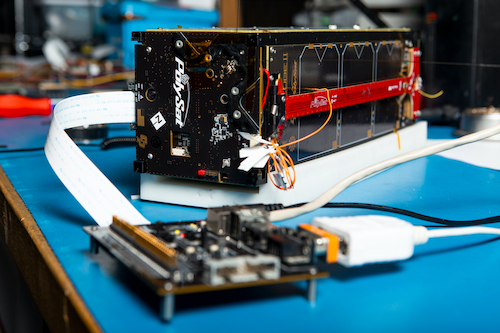

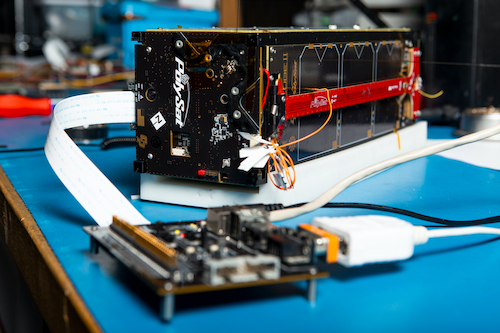

#pcb design

Developed a high-speed FPGA interface for a TDC application.

Sep 2018 - Present

:: Software Developer Part-time

#python

#c/c++

#image processing

#OpenCV

#DevOps

#databases

I originally interned at LLNL over the summer of 2017 and joined part-time during university to support the software side of the project longer-term. I continue to develop vision algorithms for scientific measurements, and I am also streamlining our team’s workflow with workflow automation tools such as Dagster.

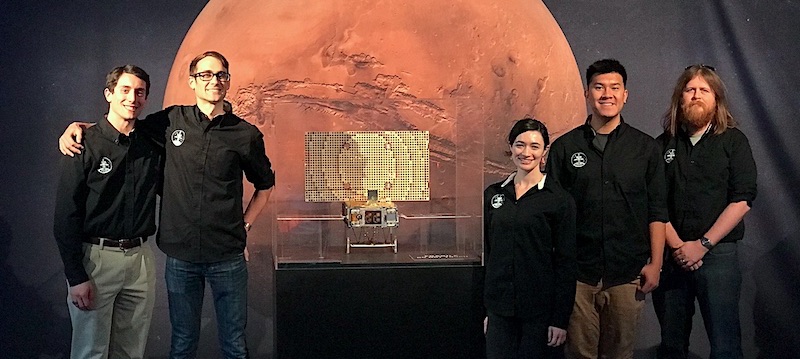

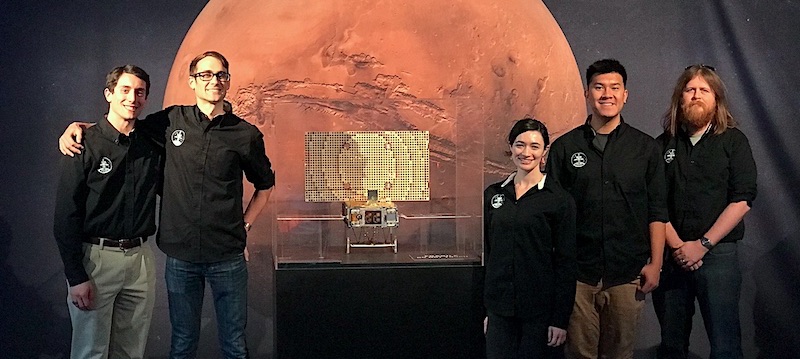

Nov 2016 - Jul 2020

:: Lab Manager • Ground Station Lead • Electrical Engineer

#pcb design

#embedded systems

#microcontrollers

#cubesat

I designed flight hardware and software for CubeSats, developed hardware and software interfaces with testability in mind, and led the lab through a sprint in which it was producing multiple flagship missions at once.

Jan 2017 - Jun 2020

:: Undergraduate Researcher

#c/c++

#autonomous vehicles

#image processing

#OpenCV

#python

#machine learning

#object detection

I developed image processing pipelines for autonomous vehicles (AVs) and architected an extensible simulator for AVs using Microsoft AirSim and Unreal Engine.

May 2018 - Nov 2018

:: MarCO Flight Operations Intern

#python

#js

#node

#databases

#cubesat

#data visualization

I served as the interface between the JPL operations team and the operations team at Cal Poly, in addition to my day-to-day duties, which included drafting and verifying uplink products, analyzing telemetry, and developing data management tools for streamlining and automating spacecraft operations.

Jun 2017 - Sep 2017

:: HEDP Program Summer Intern

#python

#OpenCV

#computer vision

#image processing

#hpc

I interned at LLNL, working on the Film Scanning and Re-Analysis Project. My research project was to design a semi-automated image processing pipeline to measure the size of the shockwave from a nuclear blast. The pipeline I developed is written in Python using the OpenCV library and can detect and accurately measure the shockwave and fireball with minimal user input.