Sep 2018 - Present

:: Software Developer Part-time

#python

#c/c++

#image processing

#OpenCV

#DevOps

#databases

I originally interned at LLNL over the summer of 2017 and joined part-time during university to support the software side of the project longer-term. I continue to develop vision algorithms for scientific measurements, and I am also streamlining our team’s workflow with workflow automation tools like Dagster.

Sep 2020 - Dec 2020

:: Speech Recognition and Understanding Semester Project (CMU 18-781)

#python

#machine learning

#deep learning

#speech recognition

Automatic speech recognition (ASR) is used heavily in eyes-busy or hands-busy situations, and oftentimes the user may be speaking over noise. My peers and I are particularly interested in how music affects ASR decoding. We use several music datasets of varied genre or broken-down instrumentation to perform in-depth analysis of how different aspects of music influence a speech recognizer’s performance. We then train a new model based on what we have learned to see whether we can improve on the original model’s performance.

Oct 2020 - Dec 2020

:: How to Write Low Power Code for the IOT Semester Project (CMU 18-747)

#c/c++

#embedded systems

#python

#algorithms

The USGS measures stream flows because they are critical for long-term tracking, modeling, and forecasting to ensure that federal water priorities and responsibilities can be met and that the nation’s rivers can be effectively managed. Recently, the USGS has been looking at non-contact methods of collecting this data, which would allow USGS scientists to gather data more safely and possibly without even going into the field. One such method is large-scale particle image velocimetry (LS-PIV). LS-PIV is a special case of optical flow algorithms; at its core is a cross-correlation operation, which is expensive in both time complexity and energy usage. My peers and I optimize the PIV algorithm to run quickly and accurately on a 32-bit embedded microcontroller with a battery life of up to 2 years.

Sep 2020 - Dec 2020

:: Machine Learning for Signal Processing Semester Project (CMU 18-797)

#python

#machine learning

#signal processing

#eeg

#ica

EEGs are extremely interesting to the signal processing world because their signals are high-dimensional and localized spatially, spectrally, and temporally. These signals are extremely low in amplitude, and artifacts appear in the measured signal due to normal human processes like blinking, breathing, and moving; noise can also appear due to external factors such as line noise or sound in the room. My peers and I implement Viola’s ICA CorrMap procedure to denoise EEGs, but rather than retrieving EEG artifacts in situ, we use Cho’s EEG dataset, which separately records subjects both at rest and performing various ‘noisy’ actions prior to a motor imagery trial. We use this data to denoise signals and to try to devise a generalized artifact template that can be transferred from user to user.

Jan 2017 - Jun 2020

:: Undergraduate Researcher

#c/c++

#autonomous vehicles

#image processing

#OpenCV

#python

#machine learning

#object detection

I developed image processing pipelines for autonomous vehicles (AVs) and architected an extensible simulator for AVs using Microsoft AirSim and Unreal Engine.

Sep 2019 - Mar 2020

:: Cal Poly Capstone & Northrop Grumman Collaboration Project (Cal Poly CPE350-450)

#c/c++

#python

#ros

#robotics

#computer vision

The Northrop Grumman Collaboration Project (NGCP) is a project club at Cal Poly that participates in engineering challenges posed by Northrop Grumman. The project is a collaboration with Northrop Grumman and with Cal Poly Pomona, with whom the work for the engineering challenge is split. The 2019 school year brought a new mission: a rugged-terrain rescue. My peers and I were tasked with sensing and intelligence for one of their new vehicles.

May 2018 - Nov 2018

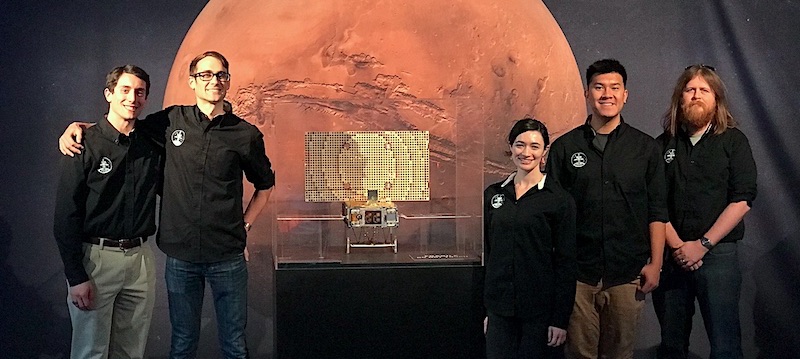

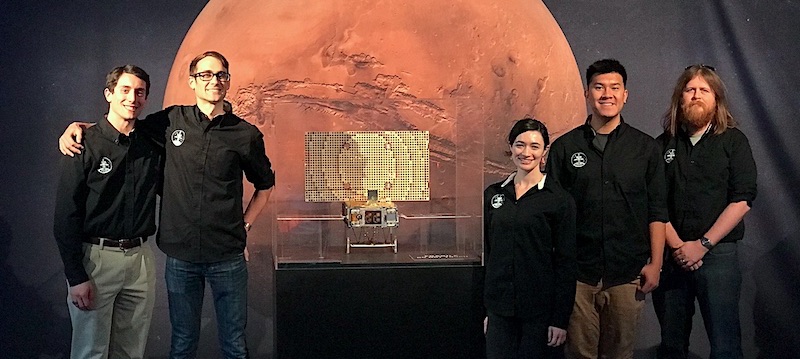

:: MarCO Flight Operations Intern

#python

#js

#node

#databases

#cubesat

#data visualization

I served as the interface between the JPL operations team and the operations team at Cal Poly, in addition to my day-to-day duties, which included drafting and verifying uplink products, analyzing telemetry, and developing data management tools to streamline and automate spacecraft operations.

Jun 2017 - Sep 2017

:: HEDP Program Summer Intern

#python

#opencv

#computer vision

#image processing

#hpc

I interned at LLNL working on the Film Scanning and Re-Analysis Project. My research project was to design a semi-automated image processing pipeline to measure the size of the shockwave from a nuclear blast. The pipeline, which I developed in Python using the OpenCV library, can detect and accurately measure the shockwave and fireball with minimal user input.